I recently saw a blog post by one of the Federal CIOs. I can’t argue with their observations, though I think we may disagree on how to tackle the problem. That CIO is going to post their direction in future posts. I’m going to take a shot at my own direction in this post.

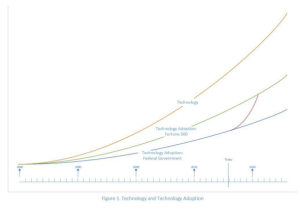

The following graph demonstrates that the US Government IT is falling behind Fortune 500 firms and way behind internet startups.

Federal CIO study graph

I remember having this debate with an IBM General Manager years ago when he was considering outsourcing some operating system components, thinking that all programmers are created equal. There is a huge difference between maintaining a legacy of millions of lines of code and starting from scratch with something new. Just as important, starting over while maintaining all the rules and regulations of the legacy is also a very difficult proposition. It takes pre-existing knowledge for success.

This CIO faces a problem that is similar to many other businesses. It’s true for mainframes today, as it will be for Microsoft Windows and Linux systems in the future. There are millions of lines of “legacy code” in languages that are less popular today than they were in the past. The inference is to move away from the legacy code toward a modern language where there are more skills available. As a factoid, there are more ARM chips in the market today than Intel chips. There are more applications being developed for iOS and Android than for Microsoft Windows, and that’s way more than are being developed for mainframes. So that might lead someone to believe that’s the programming model of this generation. And as I’ve said in an earlier post, if your IT career began in the 1990s and you hated mainframes, you were right... at that time.

But like everything, time changes things. IBM and vendor partners have dramatically changed what the mainframe was into a more modern computing environment. IBM spends over $1B in R&D for each generation of the mainframe that comes out about every two years now. I’m going to park that, for a moment, to go to another topic, that is more relevant to the skills discussion.

Patterns

Programming is about patterns. Patterns occur at a process level, in languages and in behaviors. There are three broader patterns at work here: Systems of Record, Engagement and Insight. I’ve written about that before, but Record deals with transaction processing, Engagement deals with the end user interface and Insight is about analytics. Most programming being done today is around Systems of Engagement – taking advantage of enhancements in smartphones, wearable tech (e.g. watches and fitness trackers) and other devices that make up the Internet of Things. GPS, accelerometer, touch, voice and biometrics are just a few of the advances that improve the human-computer interface. The mainframe has avoided this programming area completely as a native interface. That makes complete sense. Ignored by many, though, is the fact that the mainframe has fully embraced leveraging those capabilities through interoperability and standard formats and protocols. They enable hybrid programming to reach out to those interfaces to simplify the deployment of Systems of Record. In addition, the mainframe has been integrated with Systems of Insight so that real-time analytics can be applied to traditional Systems of Record to reduce risk.

This link will take you to a tremendous video about the z13 server and its ability to satisfy these new capabilities. Warning – it’s 30+ minutes long.

Where will the skills come from?

Another fear raised is that schools no longer teach “mainframe”. Perish the thought. While fewer schools teach “mainframe” than teach commodity system programming, there is a wealth of schools across the world that are part of IBM’s System z Academic Initiative. Checking their website, there are three in Maryland, close to the Federal government and very close to the agency head writing the blog. But you know, “you can’t trust the marketing” materials put out by a vendor. So I went to the Loyola College of Maryland, University of Maryland Eastern Shore (UMES) and Prince George County Community College web sites to see what they said about the IBM Academic Initiative. Honestly, the info I found was from 2011-13, other than Prince George, which was up to date. So I reached out to the schools. UMES responded quickly.

“First and foremost, I would like to inform you that we are actively involved in the IBM Academic Initiative. Dr. Robert Johnson is the Chair of the Department of Mathematics and Computer Science is the lead person in the initiative. Further, they are currently in the process in moving into our new $100 million Engineering and Aviation Science Building which will significantly enhance our capabilities to support the initiative.”

Here’s a brochure for their program.

Most importantly, success is not a two-way street between IBM and the schools. It’s four-way, including businesses/agencies and the students. The best schools will work with businesses to provide internships for students PRIOR to graduation. There is generally a very high (close to 50%) success rate in those students choosing full-time employment at the business where they did an internship. I strongly encourage any business or agency concerned about future skills deployment to reach out to these schools and work directly with them. Experience shows that you’ll be very pleased with the results. UMES gave me their cell numbers, so reach out to me if you’d like a direct introduction.

Adopt New Technologies and dump the old?

The collective wisdom of the Federal CIOs seems to point to new technologies as the “future” of programming. The referenced blog points to Uber, Siri and Facebook as examples of such applications and suggests they may be irrelevant in five years (see Myspace as an example). New technologies grow up in a vacuum. There is no maintenance legacy. It doesn’t mean the legacy can’t work with them, though. A prior blog entry looks at 22 emerging technologies and their relationship to the mainframe and how hybrid computing can solve new business problems.

Let’s consider one of the new, cool tech referenced: Uber. I happen to have a chauffeur’s license (a story for another time) and am very familiar and active with Livery legislation. The Uber mobile application is actually very simple and easy to recreate. What makes them successful is their business model and practices. They hire drivers as contractors, therefore no tax consequences for Uber. They avoid the bureaucracy of Livery laws.

There is a state law that enables the New York City Taxi and Limousine Commission (T&LC) to regulate who and what can be operated within the boroughs. This is for the “safety and comfort of passengers”. However, it’s big money. Medallions, per cab, have cost up to $750,000 just to put a car on the street, and the T&LC limits the number of medallions. Cars from outside the T&LC are not allowed to make more than one stop in the city. They cannot pick up a passenger at an airport if they dropped them off more than 24 hours ago. The T&LC has 250+ officers in unmarked vehicles that follow and intimidate non-T&LC livery vehicles in the city. I witnessed a stretch limo being impounded by the T&LC when an upstate Livery firm dropped off passengers returning from a NYC funeral at a NYC restaurant before traveling north. The second stop was illegal. In any event, other states (CT and NJ) got upset with this bureaucracy. They lobbied, and a Federal law resulted that allows reciprocal rights for other states to operate without joining the T&LC. But upstate Livery can’t participate. The NY Assembly and Senate have had to modify laws to create T&LCs in neighboring jurisdictions to allow reciprocal rights in NYC locations. Rockland, Nassau and Westchester counties have T&LCs now. This is the third year that legislation to enable reciprocal rights for Dutchess and Ulster has been up for a vote. The NY Assembly has passed its legislation, but the NY Senate hasn’t. Last year, they decided to wait on Dutchess and Ulster until they figured out how to allow Uber and Lyft to operate in NYC exempt from the T&LC bureaucracy. That legislation has now been created and will be voted on soon.

The T&LC makes revenue by selling taxi medallions and collecting tax on fares. Uber and Lyft disrupt those economics. Livery vehicles pay $3,000 per year for insurance. Uber and Lyft cut deals with insurance companies to lower that to $600 per year to make themselves more competitive. The drivers must also have personal insurance on the cars when a fare isn’t present. Laws are now being enacted to allow “Transportation Network Companies” (TNC, as they generically refer to Uber and Lyft) to get “fair access” to markets in NY without this bureaucracy. I’ve developed an app which will qualify the “local” livery company to operate as a TNC to reduce their costs and, in turn, reduce the cost to consumers. Will the government allow that? Will the Dutchess and Ulster laws pass? This is more about big money, venture capital and paid lobbyists getting to the legislative leaders than about the small livery companies trying to stay in business. We’ll see if the legislation and the bureaucracy will enable the small livery services to morph into a mini-Uber. The legislation enables the Commissioners of Insurance and Motor Vehicles to regulate the “TNC” businesses. The legislation doesn’t prescribe how that will be managed nor how much it will cost. By the way, did you notice that the legislation for Uber includes a lighted icon in the front and rear of the car to identify it? That’s as much for passenger safety as it is to make it easier for the T&LC police to pull over the cars if the legislation doesn’t pass. Not much likelihood of that, though, given the amount of money changing hands in Albany.

Long story short – Uber is more about business processes than it is about new applications.

Past Technology Evolution Examples

Going back to the graph, there is much to learn from prior experiences of the Fortune 500 and government agencies introducing new technology.

Learn from the Fortune 500 – the good:

Benefits processing: Hewitt Assoc and Fidelity continuously advance their capabilities. They provide integration with employer payroll systems. They have up to the minute accuracy of consumer records. They provide immediate access to Accruals and eligibility. They’ve adopted web and mobile technologies as Systems of Engagement, including biometric security authentication.

Claims processing: Travelers Insurance has historically reduced IT and people expense 10% annually while improving response times. Claims agents leverage mobile technology for accidents and disasters as input to “legacy” systems.

Learn from the government – the good:

The FBI and VA leverage mainframe virtualization to avoid IT costs of millions of dollars over commodity systems, while improving security, resilience and service level agreements. They run the same code in a different container with a superior operations model and lower costs.

All of the above use Hybrid technology which includes the mainframe.

Learn from the government – the bad:

Marine Corps – hosted by an IT supplier that gouges them on mainframe costs – three times the amount if they hosted it themselves. The IT supplier takes floor space, energy and cooling costs for an entire data center and only bills to the mainframe users. The IT group claims: Commodity systems wouldn’t be affordable if they were “taxed” with those costs. That’s why understanding the Total Cost of Ownership is a critical success factor when considering mainframe vs. commodity system costs. Unfortunately, regulations are in place that mandate that the Marine Corps use that particular IT Supplier. Other groups have bucked that policy to save money.
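To make the cost-allocation point concrete, here is a minimal sketch of how billing a shared facility cost to only one platform inflates that platform’s apparent cost. Every number and name below is hypothetical and chosen only for illustration; none of it comes from the Marine Corps or its supplier.

```python
def allocate_facility_cost(facility_cost, usage_share, billed_platforms):
    """Split a shared facility cost across only the platforms that get billed.

    usage_share maps platform -> fraction of floor space, energy and cooling used.
    billed_platforms is the subset of platforms that actually receive an invoice.
    """
    billed_total = sum(usage_share[p] for p in billed_platforms)
    return {
        p: facility_cost * usage_share[p] / billed_total if p in billed_platforms else 0.0
        for p in usage_share
    }


# Hypothetical figures, for illustration only.
FACILITY_COST = 9_000_000  # annual floor space, energy and cooling for the data center
USAGE_SHARE = {"mainframe": 0.25, "commodity": 0.75}

# Everyone pays in proportion to what they use.
fair = allocate_facility_cost(FACILITY_COST, USAGE_SHARE, {"mainframe", "commodity"})

# Only mainframe users are billed, so they carry the whole facility.
skewed = allocate_facility_cost(FACILITY_COST, USAGE_SHARE, {"mainframe"})

print(fair["mainframe"], skewed["mainframe"])
```

With these made-up shares, the mainframe users’ facility bill quadruples while the commodity systems look free. A Total Cost of Ownership comparison needs the proportional view, not the invoice view.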

US Postal Service was not competitive with package tracking vs UPS and FedEx. They realized they needed to add new applications and wanted modern programming to do it. It included new engagement systems at the delivery vehicles via mobile technology. That’s the good. The bad: they spent hundreds of millions of dollars on redundant “commodity” IT infrastructure and copied key data and applications from the mainframe in order to host the new applications, while leaving the mainframe running. Testing and benchmarking have demonstrated that adding the new applications to the existing mainframes would have avoided millions in costs and operations complexity, while simplifying the architecture and improving SLAs. With package shipping volumes increasing annually, they’ve continued to upgrade the mainframe each year. They are just spending too much overall. While they collaborate between the systems by moving data, they could save more if they shared the data in real-time.

Prescription for change

While a prescription for change is forthcoming in the CIO’s future blogs, let’s hypothesize some changes for their benefit.

Modernization of the development environment

Rational tools – They move the mainframe application development to commodity systems. This moves 80% of the development off the mainframe to reduce IT costs. They provide tools to modernize and document the “legacy” applications and simplify their maintenance. They provide seamless test to the mainframe and other platforms of deployment choice. One large business has 1000 Java developers for commodity systems, 400 Cobol programmers for the mainframe and 50 developers familiar with Java and Cobol to enable hybrid programming and integration. All use the same Rational development front end. From a skills perspective, the mainframe development can now look and feel exactly the same as development on commodity systems. This eases the skills and knowledge requirements to start.

Language modernization:

Cobol Copybooks – the means to define data structures – are now sharable with web services and those services can launch from Cobol. More on that in a moment.
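As a rough illustration of what sharing a copybook with a web service involves, here is a minimal sketch that maps a fixed-width record onto JSON. The copybook, the field names and the `parse_record` helper are all hypothetical. It assumes the record has already been converted to text and that every field is unsigned DISPLAY data; real tooling also handles EBCDIC, packed decimals, signs and nested groups.

```python
import json
from decimal import Decimal

# Hypothetical copybook this layout stands in for:
#   01 CUSTOMER-RECORD.
#      05 CUST-ID     PIC 9(6).
#      05 CUST-NAME   PIC X(20).
#      05 BALANCE     PIC 9(7)V99.
# Each entry: (json_name, length, kind, implied decimal places)
LAYOUT = [
    ("cust_id", 6, "num", 0),
    ("cust_name", 20, "text", 0),
    ("balance", 9, "num", 2),
]


def parse_record(record, layout=LAYOUT):
    """Slice a fixed-width text record into a dict, field by field."""
    result = {}
    offset = 0
    for name, length, kind, scale in layout:
        raw = record[offset:offset + length]
        offset += length
        if kind == "text":
            result[name] = raw.rstrip()  # drop trailing pad spaces
        elif scale == 0:
            result[name] = int(raw)
        else:
            # Apply the implied decimal point (the V in the PICTURE clause).
            result[name] = str(Decimal(int(raw)).scaleb(-scale))
    return result


record = "000042" + "JANE DOE".ljust(20) + "000123456"
payload = json.dumps(parse_record(record))
print(payload)
```

The balance is emitted as a string so the exact decimal value survives the trip through JSON; that is a design choice in this sketch, not a requirement of any particular product.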

Chip Speed

The System z13 server runs dual core 5GHz processors. Benchmarks show that Java runs faster here than on any other platform. The video referenced earlier provides specifics. With direct access to databases and files, business applications can have better performance than on other architectures. With fault tolerance and an improved hardware and software security architecture, the result is a very price-competitive hosting environment for new workloads.

Risk and Fraud analytics

Financial services businesses are doing real-time analytics in the middle of their System of Record transaction programs to assess risk and avoid fraud. Leveraging the Copybook capability, they can call out to the 1000+ processors in the IBM DB2 Analytics Accelerator (IDAA, based on Netezza technology) that have been tied into the mainframe to shorten time to resolution.

Callsign, a biometric authentication and fraud prevention technology, can leverage a modern smartphone to identify the owner/user of the device before they actually answer a challenge, which could be a fingerprint, facial recognition or voice. Using the accelerometer in the phone, the GPS and pressure points on the touch pad, along with historic behavior patterns, Callsign can tell by the way a person is holding a phone whether it’s the original user or someone else before offering them the authentication challenge. This type of technology can be used at kiosks in regional/branch offices to enroll users and make sure they are the real person requesting later service. No need for a card. A unique user id is sufficient to provide authentication. True, many low-income users/beneficiaries may not have smartphone capability. Alternative mechanisms can be deployed for challenge/response authentication. But maybe providing a low-cost device to beneficiaries for this purpose, a more modern version of the “RSA token devices”, might reduce overall costs for low-income users. Watch this space.

One of the Callsign customers, a large credit card processing bank, is calling out to Callsign from a “legacy” mainframe transaction program to authenticate that the real customer is at the point of sale or ATM device requesting service. Compare that to an experience I had recently. Visiting 500 miles from home, I went to a big box department store and paid with a valid credit card. Everything was good, but the transaction was denied. I then used a debit card, same bank, same credit card service, but used my PIN. The transaction was approved. As I walked out of the store, I got a call from the credit card provider asking me if I had just attempted to use the card. They restored my card to service immediately. Use of the Callsign capability eliminates the human intervention, lowers my embarrassment and speeds transaction processing.

Going a step further, Callsign runs on Amazon Web Services (AWS) or a private cloud today. This is a distributed connection to the transaction systems calling out to it. There are about 15 “risk tests” that can be done, but typically just three can be done and the results fed back to make a risk decision in the time allowed for a transaction to complete. We’ve hypothesized that if Callsign were running on a mainframe, with a memory connection to the transaction programs, 10 risk tests could be done on the mainframe while maintaining the service level agreement of the “legacy” transaction programs. Stay tuned for future updates in this space.

The NSA has proven that leveraging a Google-like search capability can help stop attacks. Why not use web crawling software to look for fraud and overpayments? Leveraging online obituary information, an insurance company or benefits provider could determine if a person has died and is no longer eligible for services. In addition, it can predict the services that may be available to the survivors of that person. This can speed up time to deploy payments to their survivors. These web crawlers can feed a data warehouse searching for fraud but also feed real-time systems to avoid fraud for new transactions.
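The matching step at the heart of that idea might look something like the sketch below. It assumes a crawler has already produced obituary records with a name and a birth date; the record shapes and helper names are hypothetical. A name plus birth date match is only a lead for a human to review, never proof of identity.

```python
from datetime import date


def normalize(name):
    """Lower-case a name, strip punctuation and ignore word order."""
    tokens = name.lower().replace(",", " ").replace(".", " ").split()
    return " ".join(sorted(tokens))


def find_possible_deceased(beneficiaries, obituaries):
    """Return ids of beneficiaries whose name and birth date match an obituary."""
    seen = {(normalize(o["name"]), o["born"]) for o in obituaries}
    return [b["id"] for b in beneficiaries if (normalize(b["name"]), b["born"]) in seen]


# Hypothetical sample data.
beneficiaries = [
    {"id": "B1", "name": "John Q. Smith", "born": date(1940, 5, 1)},
    {"id": "B2", "name": "Mary Jones", "born": date(1950, 1, 2)},
]
obituaries = [
    {"name": "SMITH, JOHN Q", "born": date(1940, 5, 1)},
]

flagged = find_possible_deceased(beneficiaries, obituaries)
print(flagged)
```

The same flagged list could be loaded into a data warehouse for batch review or consulted by a transaction program before a payment goes out.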

Collaboration is necessary to move forward:

Education: partnerships between vendors, businesses/agencies and schools are necessary to create the next generation of IT professionals (programmers and operations) as well as to update the skills of existing personnel.

Operations: Today, fiefdoms around individual architectures or administrative domains exist that create/foster conflict and drive up IT costs. Not everyone is going to get along. Organizational politics and budgets have as much to do with fiefdoms as anything. Leveraging the Rational developer example, where a small group of people have some hybrid responsibility, can lead to breakthroughs in processing schemes.

Legislation: Where necessary, this can be valuable to enable a leap toward something new that will provide value and reduce costs.

Summary

There is no right or perfect answer to any IT decision. As the saying goes, “throwing the baby out with the bathwater” isn’t necessarily a good approach, and it can lead to unintended consequences. Leveraging a hybrid computing, operational and development environment can make a large shift toward leveraging “modern” application models. Happy programming!