Last week, I wrote about organizational fiefdom’s and how they can inhibit efficiency in deployment models.

This week, I’ll describe a couple of data patterns that are common across several business models. Most important, they can take advantage of a hybrid deployment model and some unique System z characteristics that can result in a dramatic reduction in operational and security overhead and simplify compliance to a wide variety of government, industry and business regulations. It’s all based on a shared data model and collaboration across end to end technologies.

To me, there are three critical business oriented data operations: update a record, read a record and analyze a collection of records. There are also management operations: backup/archive, migration/recall and disaster recovery. I’m going to focus on the business oriented aspects for this post.

Let’s consider three different scenarios. A national intelligence operation that is processing satellite and other electronic information. A health care environment that processes medical records and data from medical devices. And a Criminal database containing wants, warrants and criminal records.

Each of these has a data ingest process that comes from individuals or individual devices. Satellite data is beamed to earth, typically to an x86 based server and then transmitted and loaded into a “System of Record” which might be considered the master database.

Medical records can be updated by a medical professional and patient via an end user device or portal and input can be received from medical devices e.g. EKG, MRI, XRay, etc. All of this information is then loaded into a master database.

BOLO’s (Be On the Look Out), Criminal Records, Wants and Warrants are input by various police agencies and transmitted to a master record database that can be accessed by other police departments to see if someone they’ve stopped or is in custody may be wanted by other jurisdictions.

Each of these scenarios has something special about them – a need to know. A doctor or nurse can’t “troll” a medical database looking for any data. That’s against HIPAA policy. They should only be looking at records associated with patients they are working with.

Intelligence analysts may only be able to see certain satellite or ELINT (electronic intelligence feeds) based on their security clearance.

Police in one jurisdiction cannot query or update records in other jurisdictions unless they are pre-approved for a particular case.

This Need to Know can also be called Compartmentalization or labeling of data. DB2 on z/OS has technology known as Multi-Level Security that allows data to be hidden from users and applications that don’t have a need to know. The best part of this technology is there doesn’t need to be any change to an application. The need to know criteria is established between security administrators and database administrators. As a result, when multiple users attempt to query an entire database, if they are in different compartments they’ll get completely different result sets without knowing the full breadth of the database.

So let’s look at a Medical System that has multiple hospitals scattered across a broad geography. Each hospital has specialty areas: Orthopedics, Oncologists, Pediatrics, etc. There is a Primary Care Physician (PCP) for each individual patient. There is the Patient. There are a bunch of different medical test devices: MRI, CTScan, EKG, etc. At some point, a Patient Care Record is created. A PCP is identified to that Patient. They may order tests on behalf of the patient. Test data is captured, stored and linked to the patient’s record. A Cardiologist may see and annotate information associated with the EKG. Any other Cardiologist at the hospital may also see that EKG and annotate it. An Orthopedic surgeon may look at it, but not annotate it. A doctor at another hospital may not even know that the patient exists unless they are invited to look at it by a peer or via the patient requesting a second opinion.

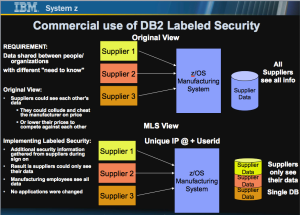

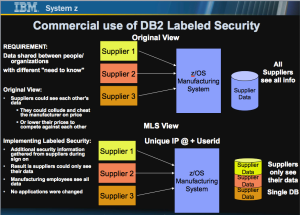

The following diagram shows a Manufacturing business that gets a variety of “parts” from different suppliers. They allowed each of their suppliers to check the on hand inventory to allow for continuous manufacturing and improve the supply chain operations. The unintended consequence of this implementation was that each supplier could see another suppliers’ inventory and price per unit. As a result, devious suppliers could undercut the competition or worse, collude with their competitors to raise prices.

By turning on the labeled security capabilities of DB2, the suppliers can only see their records. Employees of the manufacturing company can see all the records in the database. No applications were changed. The manufacturing company had to collect some additional security information for each supplier in order for this to work properly. You’ll notice the inclusion of internet address (IP @) as a security context. The manufacturer can “force” supplier updates to come from the supplier’s site. It will not allow a supplier’s employees to logon from home, for example. This could help inhibit a rogue employee of the supplier from compromising the Manufacturer’s database.

There are other examples of production systems leveraging MLS capabilities. Lockheed Martin has been operating a secure environment for multiple agencies for many years. This has been for the National Geospatial Intelligence Agency (NGA) and its mission partners.

But here’s another important distinction from other models. The data operations are somewhat like the Eagle’s song Hotel California:

“We are programmed to receive, You can check out anytime you like… but you can never leave”.

That means if you are viewing the data, you are viewing the “System of Record”. Where you are viewing from is called the “System of Engagement“. By definition, the System of Engagement can overlay and complement the System of Record by transforming it. This is can be a stateless entity or read only. The XRay image, stored in a database, is just a collection of bytes. The end user may not have the proper viewer installed on their desktop. The System of Engagement will transform the image into something consumable and recognizable by the end user. The Hospital doesn’t make a copy of the data and transmit it to other service providers. If they did, they’d have to ensure that those new data owners of the copy adhered to the same stringent privacy laws for which they are accountable. This becomes a logistics nightmare. Instead, the “user” being a patient or medical professional accesses a program (The System of Engagement), which could be a virtual desktop or web service, which in turn accesses the database and remotely presents the requested data to the end user. This is more of an image as the end user device is considered stateless. No local copy is saved. Because this is solely a remote presentation of the information, that device can be exempt from Privacy audits because it is understood that no local copy is made. This doesn’t address an end user taking a picture or writing down information associated with the record being processed. There are other products that can be deployed to capture these breaches of privacy policy. I can cover that in a later post.

Compartments can be created that contain only a subset of the stored records, similar to a view. So analytic processing might be done across all database records, looking for patterns, fraud, opportunities, etc, but without including protected personally identifiable information. For example, disease outbreaks by region, trends, risks, etc, but again, this analysis may only be done by someone with a need to know.

Look here for a video associated with Intelligence Analysts dealing with Satellite transmissions and leveraging this workflow.

It may be hard for some of you, but imagine the different satellites are actually medical devices. Imagine the different compartments are associated with various hierarchies of users at a Corporate level, Branch/Region, Department and individual basis. Imagine the spatial data results of this video as demonstrated using Google Earth, are instead rendering views of XRays, CTScan’s, etc. Hopefully, this is a compelling view of the realm of possibilities. One thing that might appear contrary to what I described earlier is the fact that in this video, various users know that a satellite exists when they didn’t have a need to know. Some satellites may be Top Secret, so unauthorized users have no need to know that that particular satellite even exists. To correct that situation vs what the video depicts, if the System of Engagement had requested sign on by the user first, they would not have seen the entire list of satellite’s as that may have been excluded by an additional database query of accessible satellites for that user. However, when comparing to medical devices, there is no secret that there are multiple imaging devices, but the results may not be visible to a user, based on the need to know. Many, many options exist. These are just examples to get the discussion started about new possibilities.

There’s another important aspect of this. Any business with DB2 on z/OS already has the System of Record capability. There is a change in operations management required, but no additional software license charges required to implement this. Other platforms are required to separate data (aka copy it) to facilitate the ease of compartmentalization available on DB2 for z/OS. Analytics can be provided against this system of record locally, by products such as the IBM Data Analytics Accelerator (IDAA) or Veristorm’s zDoop, which is a Hadoop solution running on the mainframe. The mainframe is capable of meeting the service level agreements of both the updates and queries of the database with very large scale.

The Systems of Engagement may be Linux or Windows systems running on Virtual Desktops or PC servers, as well as within Linux for System z or z/OS application and transaction processing environments. The end user access could be from kiosks (thin client terminals), Smart Devices, PC’s or business specific devices e.g. Point of Sale, ATM, police cruiser access points, etc. These systems could be hosted in a public or private cloud. They could be part of an existing system infrastructure. Authentication and access control should be centrally managed across the entire operational infrastructure.

The net of all this is a couple of examples of hybrid computing and collaboration across systems that can dramatically reduce the complexity and improve the efficiency of end to end business processes. If you are still compartmentalizing operations by server silos you may have the unintended consequence of missing some dramatic cost savings or better stated, cost avoidance. Compartmentalization on a need to know basis may initially lead a business toward separation of duties and separation/copying of data. But with the capabilities described, it’s actually a form of consolidation and collaboration that enables a greater degree of sharing the System of Record. You might not have to spend more in systems deployment to solve some very complex problems. Happy programming!